Christ and other geology department colleagues were sorting through sediment from the core sample, washing it off before the next stage of analysis, when he noted peculiar black specks floating in the water.

He collected a few and put them under the microscope for a better look.

“Oh my God, these are plants,” he remembers exclaiming.

“I went full-on mad scientist.”

After his initial giddiness, the significance of the specks sank in.

Christ, the lead author on a paper published this month in Proceedings of the National Academy of Sciences, had found in the sediment “freeze-dried fossils” and other direct evidence that Greenland was ice-free in the last million years.

The finding is more than an academic curiosity: It has direct implications for our future.

“It’s not if Greenland is melting, but how fast,” says Joerg Schaefer, a coauthor and climate geochemist at Columbia University’s Lamont-Doherty Earth Observatory.

Together with a sample from central Greenland that he and colleagues analyzed in 2016, he says, the Camp Century material shows that “there is no question: Greenland is an unstable ice sheet.”

For Schaefer, analyzing the Camp Century subglacial sample after it languished for more than half a century is a thrill, even though his team’s results are bad news.

“As a scientist, it’s exciting,” he says.

“As a citizen of the planet, it’s horrifying.”

Researchers had long thought that Greenland’s ice sheet, more than 2 miles thick in places, was essentially permanent and had blanketed the island for more than 2 million years.

The subglacial sample indicates the massive ice sheet can melt far more easily than most models suggest, which would dump enough water into the oceans to raise sea levels by up to 20 feet, all but wiping major cities like London and Boston off the map.

“This study is very important. It shows the Greenland Ice Sheet can disappear with the kind of climate warming we’re projecting over the next century,” says William Colgan, a climatologist for the Geological Survey of Denmark and Greenland who was not involved in the research.

Earth’s polar regions are warming much faster than the rest of the planet, with most models suggesting a rise of at least 14 degrees Fahrenheit (about 8 degrees Celsius) in the next century.

Together with the 2016 analysis, the new Camp Century paper shows that such a temperature bump is enough to melt the ice sheet and cause catastrophic sea rise.

“The Greenland Ice Sheet can disappear,” says Colgan.

“It is remarkably climate-sensitive.”

The Camp Century sample’s role in rethinking the impact of climate change is just the latest twist in its strange history.

In 1959, the U.S. Army set up Camp Century in northwestern Greenland, ostensibly for scientific research.

The site’s true purpose, however, was Project Iceworm: a secret Cold War plan to build hundreds of miles of tunnels about 25 feet into the ice to store nuclear missiles within striking range of the Soviet Union.

The secret military plan never happened—engineers quickly learned how rapidly and unpredictably the ice can shift, making the site highly unstable and wholly unsuitable for nuclear weapons.

Colgan, the project manager for the Camp Century Climate Monitoring Program, is one of a handful of people who have been to the site of the former Army installation, now buried under more than 100 feet of accumulated snow and ice.

“The tunnels are collapsed and compressed,” he says.

“The snow has turned to ice with pancakes of debris.”

Camp Century was abandoned in 1967, just a year after its engineers managed a true scientific feat: drilling the first ice cores.

Together with more recent cores from Antarctica and elsewhere in Greenland, these slim cylinders of ice provide a crucial record of ancient climate conditions that researchers have since used both to understand our past and model our future.

Colgan says Camp Century has been invaluable for science, now more than ever.

“Camp Century was the first ice core program, and we’re still learning from it,” Colgan says, adding that the Cold War–era team probably realized the site’s unsuitability as a missile base very early in their work, but persevered in the name of science.

The subglacial sample, he says, “only exists because they wouldn’t take no for an answer. They punched all the way into the bedrock and even then kept going.”

Some of the mile-long Camp Century ice core had been previously studied.

After being collected in 1966, however, the subglacial core sample, about 12 feet of frozen mud and bedrock from below the ice, was stored in an Army lab freezer, then at the University at Buffalo.

The sample was eventually sent to Denmark, where it languished yet again, at the University of Copenhagen’s ice core archive.

In 2017, as staff prepared to upgrade the facility, someone noticed unopened boxes of Camp Century core samples.

Inside, rather than the slim cylinders typical of ice cores, they found glass jars of subglacial rock and clumps of frozen sediment.

Almost immediately, the find became a sensation in the field.

Getting a comparable subglacial sample today using modern drilling technology would have been prohibitively expensive.

“We knew how important these samples would be. All of us started shaking and even drooling a bit,” says Schaefer.

As word of the samples spread, he flew to Copenhagen with University of Vermont geologist Paul Bierman in hopes of negotiating for some of the material.

“We were trying not to let them see how excited we were. We just tried to keep it together.”

Subglacial material, collected from where the drill hit sediment and bedrock below the ice sheet, contains information the ice does not.

Exposed rock, like everything else on Earth’s surface, gets bombarded with cosmic rays, producing chemical signatures, called cosmogenic nuclides, that can be used to establish whether, and when, an area was ice-free.

“The nuclides are only produced if the rock sees open sky,” Schaefer says.

The work of dating the material is “really, really hard,” says Colgan, but the Camp Century sample has been initially dated, with confidence, as less than a million years old, lining up with the previously studied sample from central Greenland.

Christ, Schaefer, and their colleagues continue to analyze the Camp Century material to narrow its age range and learn more about the plant material it preserved, which is unique, since massive ice deposits usually destroy organic material.

The next phase of research, already underway, includes searching for traces of DNA that could be used to determine the species present, and even reconstruct the entire ecosystem.

So far it appears similar to modern Arctic tundra.

There’s yet more to the Camp Century core to explore.

The very bottom layers of the sample include sediment that may be up to 3 million years old, Christ says, and may include more organic matter that could be “the oldest material ever recovered from under the ice.”

Camp Century may never have hosted nuclear weapons, but it is proving to be far more significant than even its planners imagined.

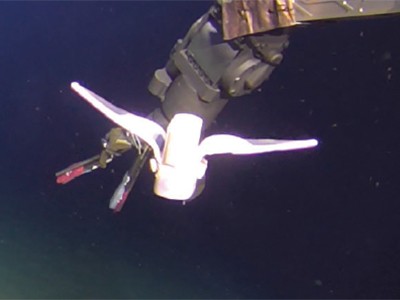

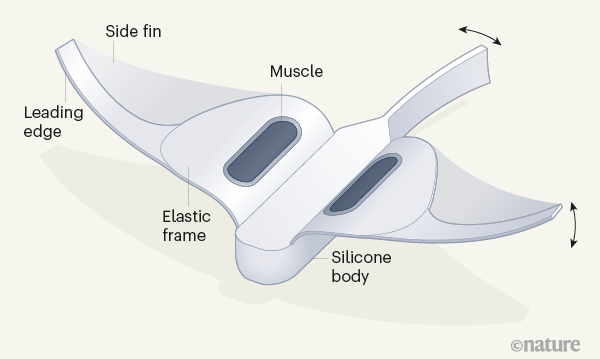

Read the paper: Self-powered soft robot in the Mariana Trench

Read the paper: Self-powered soft robot in the Mariana Trench

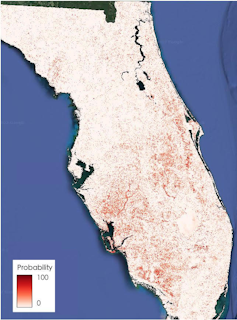

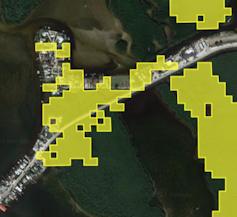

Punta Gorda, Florida, was hit by storm surge and high winds from Hurricane Ian.

Punta Gorda, Florida, was hit by storm surge and high winds from Hurricane Ian.