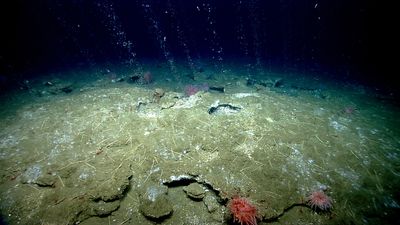

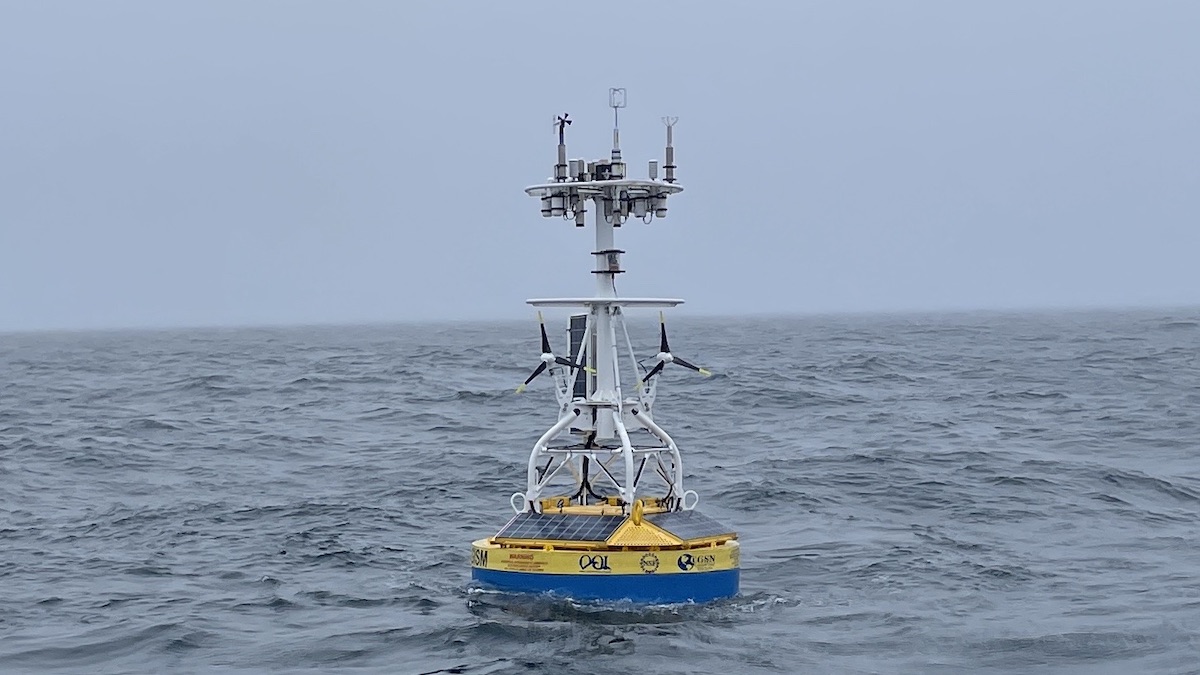

One of our first big milestones — and we mean big — came with Ocean-2, a full-scale prototype deployed off the Washington coast, built by our team of inventors, programmers, welders, physicists, and engineers.

Panthalassa is pioneering a new kind of data centre which floats in the deep ocean, powered by wave energy and connected by Starlink satellites.

Credit: Panthalassa

From Sustainability Mag by Charlie King

AI’s appetite for energy is pushing investment into uncharted territory, with US start-up Panthalassa attracting millions for its floating data centres.

If proof were needed that necessity drives innovation, the race to power AI has made the case undeniable.

Since the artificial intelligence boom accelerated in 2022, one of the defining challenges for the global economy has been how to supply the vast amounts of energy the technology demands. Companies across the world are now competing to develop viable solutions.

It is widely recognised that AI systems – along with the data centres that support them – consume enormous quantities of energy.

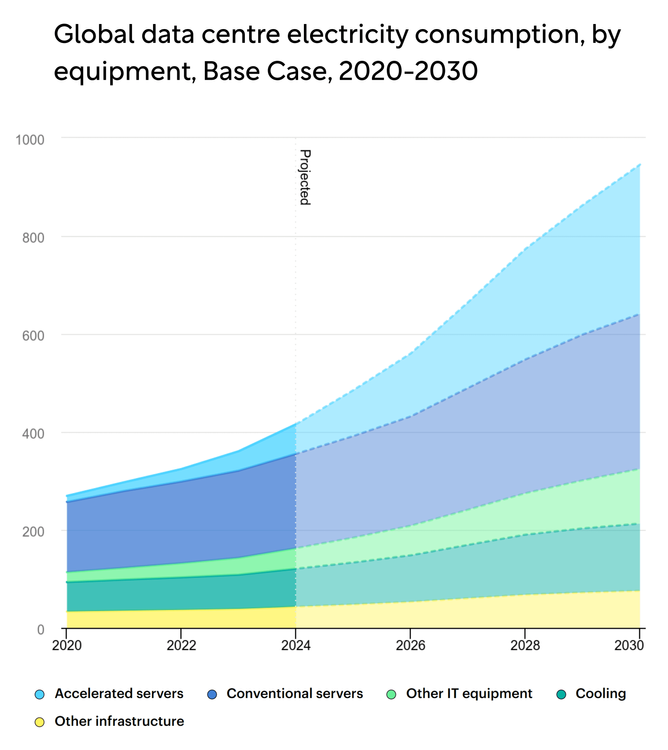

Indeed, the IEA projects that the sector’s energy consumption will rise by 30% annually through to 2030, when AI is expected to account for 3% of global energy use.

This surge in demand has triggered a wave of unusual and inventive approaches, from restarting nuclear facilities to exploring satellite-based solar power and even investing in fusion.

The IEA's projections for data centre energy consumption. Credit: IEA

Among the more unconventional ideas gaining traction is wave energy – a frequently overlooked renewable resource.

Panthalassa, an Oregon-based start-up that has spent the past decade refining its wave energy technology, has recently positioned itself at the forefront of this space.

The company takes its name from the “superocean” that once surrounded Pangea before the continents formed as we know them today – a fitting reference for a business built on harnessing ocean power.

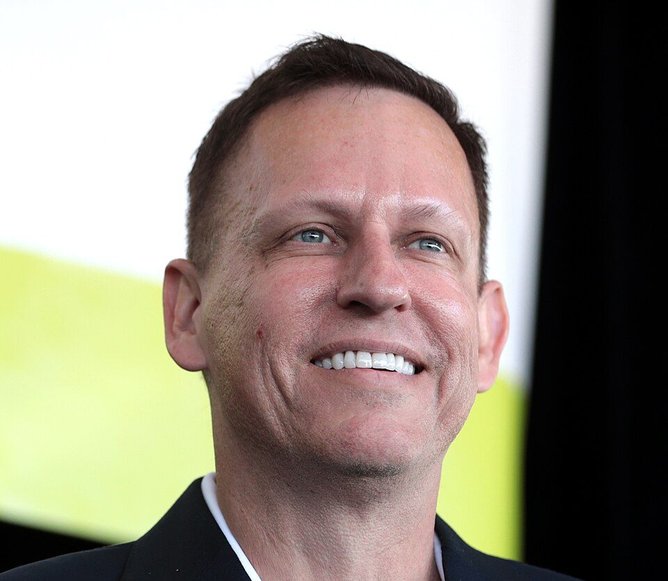

Earlier this year, Panthalassa raised US$140m in a funding round led by venture capitalist Peter Thiel, known for backing Palantir and PayPal.

In just 10 years, Panthalassa has achieved a valuation of nearly US$1bn.

Credit: Panthalassa

The strength of investor interest has pushed the company’s valuation to nearly US$1bn, including the new capital.

Alongside Peter, the round attracted high-profile supporters such as Salesforce CEO Marc Benioff, PayPal and Affirm Co-Founder Max Levchin, and veteran investor John Doerr, an early backer of Google, Amazon, Uber and Netscape.

Peter Thiel, Co-Founder of Palantir and PayPal.

Credit: Gage Skidmore

How Panthalassa’s floating data centres work

From an engineering standpoint, Panthalassa’s approach is intentionally simple.

Its “nodes” are 85-metre-long steel structures – roughly the height of London’s Big Ben – designed to sit mostly below the ocean surface.

As waves move the structure, water is forced through a turbine, generating electricity that powers AI chips housed within a sealed, seawater-cooled container.

Manufacturing the Panthalassa nodes. Credit: Panthalassa

The system avoids complexity: there are no hinges, flaps or gearboxes, and few components are exposed to the harsh realities of open-ocean conditions.

Each node continuously recirculates water internally to drive its generator, producing no direct emissions and requiring no engine.

Once towed out to sea horizontally, the structure rotates upright and travels to its designated location using only the hydrodynamic properties of its hull.

Panthalassa's wave-powered data centres do not require any connection to land. Credit: Panthalassa

Because these floating data centres operate far offshore, all AI queries are transmitted via SpaceX’s Starlink satellite network.

According to the company, this satellite link is the only connection to land the system requires.

The energy argument

Panthalassa Co-Founder and CEO Garth Sheldon-Coulson, formerly an AI and energy researcher at hedge fund Bridgewater, has been a vocal advocate for wave energy’s potential.

"Energy from open-ocean waves is low-cost, sustainable, abundant and now we have the technology to make it accessible for people," he recently told the Financial Times.

By positioning its nodes in remote deep-ocean locations rather than near coastlines, Panthalassa aims to avoid the limitations that have hindered earlier wave energy projects, such as grid connections and inconsistent wave conditions.

Garth Sheldon-Coulson, Panthalassa's Co-Founder and CEO.

Credit: Garth Sheldon-Coulson

"The waves are like a battery for sunlight and we can be capturing from it 24/7," he adds.

The company argues that wave and wind power, alongside solar and nuclear, are among the few clean energy sources capable of generating “tens of terawatts” – the level of output increasingly required by AI infrastructure.

With global demand for clean energy accelerating, this proposition is likely to attract attention from both public- and private-sector stakeholders.

‘Go where the energy is’

A defining feature of Panthalassa’s model sets it apart from traditional offshore energy projects: it does not aim to transmit electricity back to land.

Panthalassa's motto is "go where the energy is". Credit: Panthalassa

Its guiding principle – “go where the energy is” – reflects this strategy.

"One of the key insights that we had was that it's very important to use the electricity in place," Garth Sheldon-Coulson explained to the FT.

This approach eliminates one of the biggest challenges in offshore renewables: the cost and complexity of subsea transmission cables and interconnectors.

It also shifts the company’s business model. Rather than selling electricity, Panthalassa sells compute capacity, simplifying its commercial proposition in several respects.

Panthalassa’s plans for the future

The newly secured funding will support the development of a pilot manufacturing facility in the US, with initial commercial deployments expected as early as next year.

We’re heading to the middle of the ocean, the planet’s most energy-dense resource, to harness the cleanest, cheapest energy to meet humanity’s growing needs.

The company also maintains that its supply chains are sufficiently resilient to support rapid scaling.

Peter, who has long supported the libertarian “seasteading” movement advocating for floating communities in international waters and has backed Panthalassa since 2018 through Founders Fund, views the company’s work as part of a broader expansion of technological frontiers.

Panthalassa says its technology only relies on abundant materials and minerals. Credit: Panthalassa

"The future demands more compute than we can imagine. Extraterrestrial solutions are no longer science fiction. Panthalassa has opened the ocean frontier," he told the FT.

While some critics have labelled such ideas as a form of “tech neocolonialism”, Peter’s endorsement is likely to accelerate Panthalassa’s path toward mainstream adoption.
